Graduate work from Umeå Institute of Design’s MFA In Interaction Design from 2015-2018

“The MFA in Interaction Design deals with the relation between people's behaviour and the behaviour of products and services. An interaction designer gives shape to that relation, bringing cognitive, emotional and physical aspects together in a successful whole.” – Umeå Institute of Design

I had the pleasure of attending the prestigious Umeå Institute of Design's MFA program in interaction design, where I focused on experience making through physical prototyping as well as telling the narrative that drives the why of the design.

The following is a selection of the prototypes, proofs of concept and interaction designs that I created or collaborated on during my two years at UID.

Aven Avalanche Beacon / Sound Design, Product Design

Boliden Rockbreaker User Interface / Semi Autonomous UI, Mobile Device

Eye tracking User Interface for Rockbreakers / Eye Tracking UI

A History of Neural Interfaces / Faceless Interaction, Design

Democratizing Our Data: MFA Thesis Dissertation / Physical Prototyping, Fluid Assemblages

Koala Conversational Bus Kiosk / Conversational UI

-

Aven

Sound Design For Safety Equipment

A two-week collaborative project with UID Masters Of Advanced Product Design focusing on the use of sound design for avalanche beacons: radio transceivers used to find people or equipment buried under snow.

Design Team:

Jakob Dawod, Thomas Helmer, Marc Saboya Feliu

Aven Avalanche Beacon Product Demo

Aven is a life-saving, helmet-mounted safety product that enhances the current functionality of avalanche beacons by providing 360 degrees of sound as well as directional lights to guide the user to a victim. With hands-free navigation, the user can focus on tasks such as probing and digging for those buried.

As part of the standard design process, our team went through a number of steps in the creation of this prototype, beginning with low fidelity prototyping, concept sketches and a literature review. Below are examples from further along in the design process, where we built a 3D model with coded light and sound. This allowed us to take the Aven prototype “out into the wild” for some scenario based user testing.

Various stages of the coded sound and physical prototyping process

Final Conceptualization:

Once the profiles were consistent with our conceptualizations, I used Arduino's serial communication with Processing to map each sound and colour profile to a keyboard key, and mounted the lighting inside our prototype.
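The key-to-profile mapping described above can be sketched roughly as follows. This is written in Python rather than Processing for brevity, and it is only an illustration: the key choices, profile names, RGB values, tone frequencies and the comma-separated message format are all hypothetical, not the values used in the Aven prototype.

```python
# Hypothetical profiles: each keyboard key selects one light colour and one tone.
PROFILES = {
    "a": {"name": "far", "rgb": (0, 0, 255), "tone_hz": 440},
    "s": {"name": "near", "rgb": (255, 160, 0), "tone_hz": 660},
    "d": {"name": "found", "rgb": (0, 255, 0), "tone_hz": 880},
}


def encode_profile(key):
    """Return the serial message for a key press, or None for unmapped keys.

    Assumed message format: "R,G,B,Hz" followed by a newline, as ASCII bytes.
    """
    profile = PROFILES.get(key.lower())
    if profile is None:
        return None
    r, g, b = profile["rgb"]
    return f"{r},{g},{b},{profile['tone_hz']}\n".encode("ascii")
```

In a setup like this, the returned bytes would be written to the Arduino's serial port on each key press, which is what makes it possible for a hidden operator to trigger profiles by hand.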

Prototyping Process:

With the concept for the 3D model under way, I used megapixel LEDs controlled by a potentiometer and an Arduino to cycle through various colour profiles and blinking patterns. This allowed me to fine-tune the detail of each colour and sound frequency to mimic sonar.
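As a rough illustration of this kind of tuning, the sketch below maps a potentiometer reading onto a blink interval and a ping pitch. It assumes a 10-bit reading (0 to 1023), as returned by `analogRead()` on common Arduino boards; the output ranges are hypothetical and are not the values used for Aven.

```python
ADC_MAX = 1023  # assumption: 10-bit analog reading, 0..1023


def scale(reading, out_min, out_max):
    """Linearly map a potentiometer reading onto [out_min, out_max]."""
    reading = max(0, min(ADC_MAX, reading))  # clamp out-of-range values
    return out_min + (out_max - out_min) * reading / ADC_MAX


def blink_interval_ms(reading):
    """Hypothetical range: slow blink (1000 ms) down to fast blink (100 ms)."""
    return round(scale(reading, 1000, 100))


def ping_frequency_hz(reading):
    """Hypothetical range for a sonar-like ping, 400 Hz up to 1200 Hz."""
    return round(scale(reading, 400, 1200))
```

Turning the knob then sweeps both values together, so a faster blink is paired with a higher pitch.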

Wizard Of Oz Testing:

As a fully functional prototype with a wireless transceiver / receiver set up was not possible, we used the manually controlled sound and colour profiles to perform a Wizard of Oz test. Users were guided to a pre-selected, unmarked location within the testing room.

-

Boliden Mining Rock Breaker User Interface

Utilizing Semi Automation For Rock Breaker Operators

We explored the context in which remote rock breaker interfaces were used in Boliden mine operations. Operators suffer from ergonomic concerns and reduced efficiency due to screen based operation.

Design Team:

Yi Ting Chien, Siddharth Hirwani, Hector Mejia

Partners:

ABB, Boliden, ICT

Operating Multiple Rock Breakers Scenario:

In this video we wanted to show a scenario where one operator could control rock breakers at two different sites using our interface. We wanted to illustrate how operating multiple rock breakers becomes easier when we minimize the manual interactions required to get a rock breaker arm into position before rock breaking even begins.

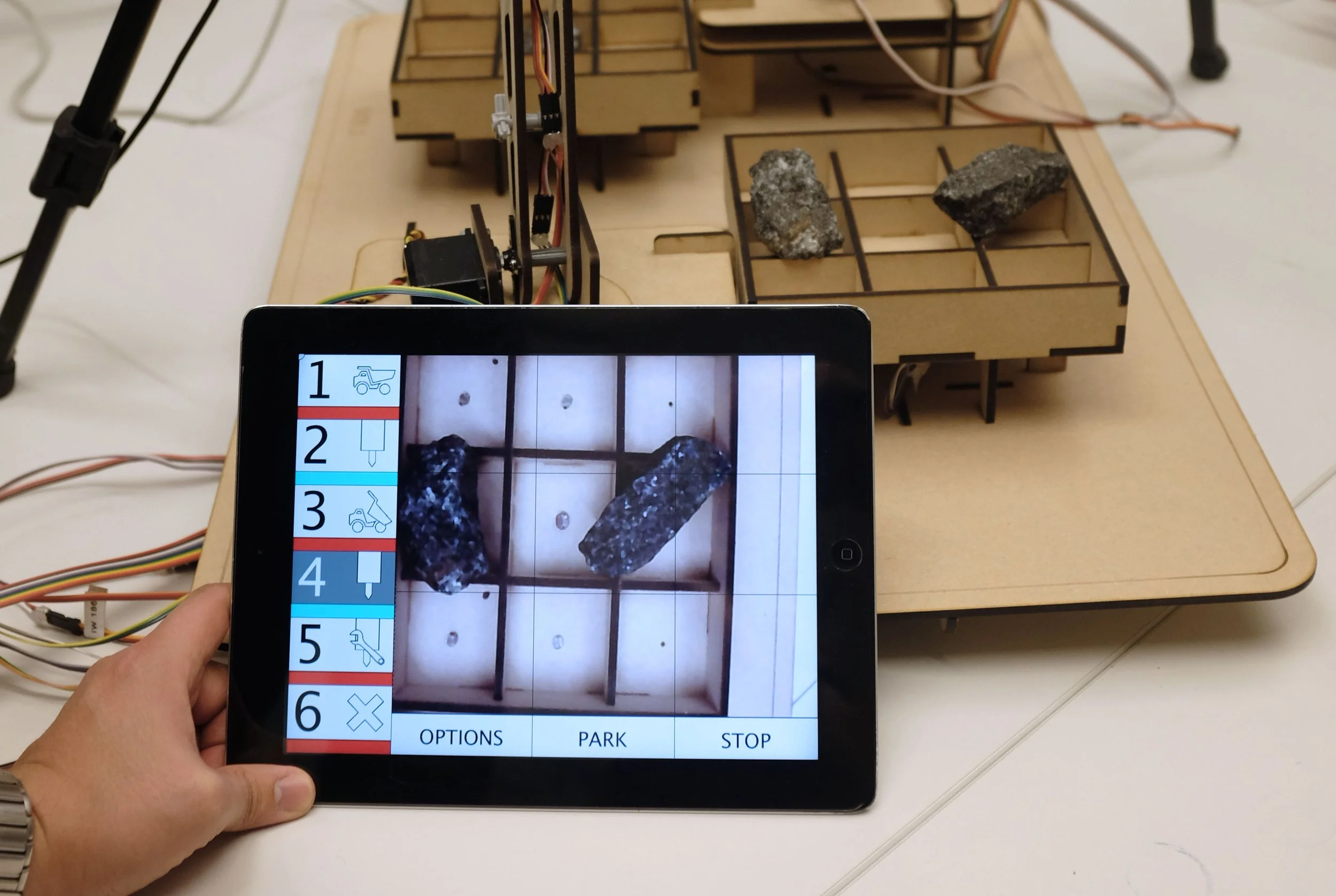

Our final prototype showed how two simulated rock breaker arms made out of MDF board could be controlled using sensors instead of manual input. When a rock is placed onto our model's grizzly (the large sieve which determines which rocks still need to be broken), the rock breaker arm moves toward the rock. The prototype helped illustrate our pitch concept that computer vision could be leveraged for arm movements, potentially reducing wear and tear caused by human error while also reducing the time it takes to position the rock breaker manually. A secondary mode was also included in our prototype, where the rock breaker arm is moved by selecting a grid based co-ordinate displayed through an iPad interface.
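The grid based mode can be sketched as a small geometry problem: turn the selected cell into a point on the grizzly, then into a rotation and reach for the arm. This is a minimal sketch under assumed conventions, not the code from our prototype. The grid size, dimensions, arm base position and the angle convention (measured counter-clockwise from the x axis) are all hypothetical.

```python
import math


def cell_centre(col, row, cols, rows, width_cm, depth_cm):
    """Centre of a grid cell on the grizzly, in cm from its front-left corner."""
    if not (0 <= col < cols and 0 <= row < rows):
        raise ValueError("cell is outside the grid")
    return ((col + 0.5) * width_cm / cols, (row + 0.5) * depth_cm / rows)


def arm_target(x_cm, y_cm, base_x_cm, base_y_cm):
    """Rotation (degrees) and reach (cm) from the arm base to a point."""
    dx, dy = x_cm - base_x_cm, y_cm - base_y_cm
    return math.degrees(math.atan2(dy, dx)), math.hypot(dx, dy)
```

For example, on an assumed 4 by 4 grid over a 40 cm square grizzly, `cell_centre(0, 0, 4, 4, 40, 40)` gives the point 5 cm in from each edge, which `arm_target` converts into a rotation and reach for an arm based at the corner.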

To extend our final design concept into the real world, we role played scenarios where a remote operator could control multiple rock breakers spread throughout the mine.

Physical and Semi Autonomous Digital User Interface Prototypes

Initial Prototyping:

In order to simulate the best possible experience my teammates assembled a working rock breaker arm. This allowed us to get a feeling for movement in real time.

Final Prototype UI Demo:

The user drives the rock breaker arm by first selecting an arm on the touch pad and then selecting a location on the grizzly, which displays rocks that require breaking. When the user is done with the manual breaking process, they can return the arm to a safe location at the touch of a button on the pad instead of having to move it manually.

Sharing the Final Concept With Boliden Stakeholders:

The final concept was well received by our stakeholders at Boliden, who were part of their product and design team, and we hope they take our insights into consideration when transitioning from a manually controlled mining process to one that is fully automated.

-

Eye Tracking Rock Breaker Interface

Interaction and User Interface Exploration

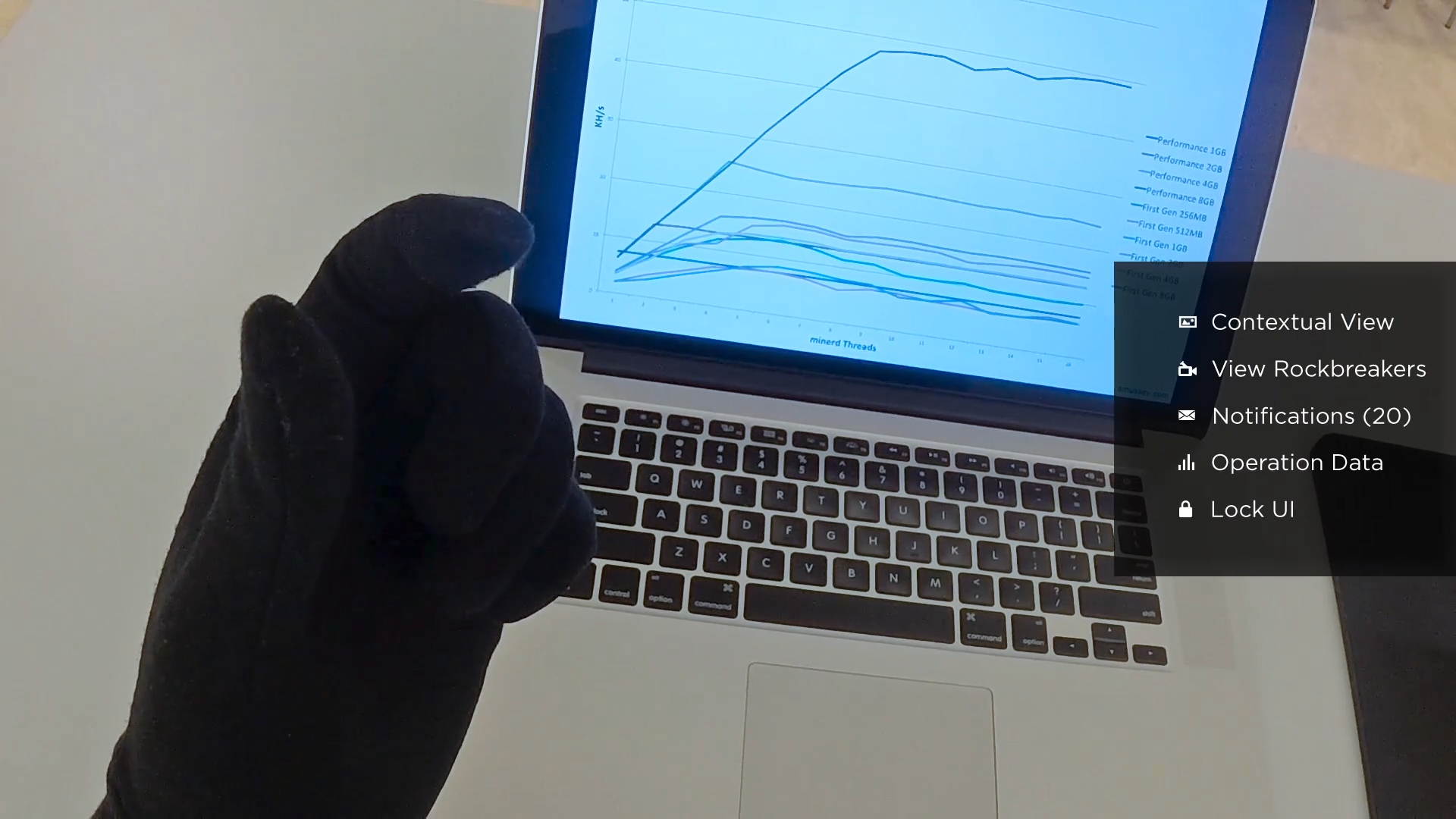

A two-day prototype exploration asking what a remote rock breaker interface would look like if it used eye tracking with a heads-up display as its core interaction elements.

Prototype for an eye tracking interface used to control remote rock breakers.

I utilized the scenario where an operator is monitoring multiple rock breakers to provide a context for design. The final solution also uses finger gestures through a special glove used to make selections and navigate the interface's information architecture.

Music: https://www.bensound.com

-

A History Of Neural Interfaces

Faceless Interaction & Design Fiction

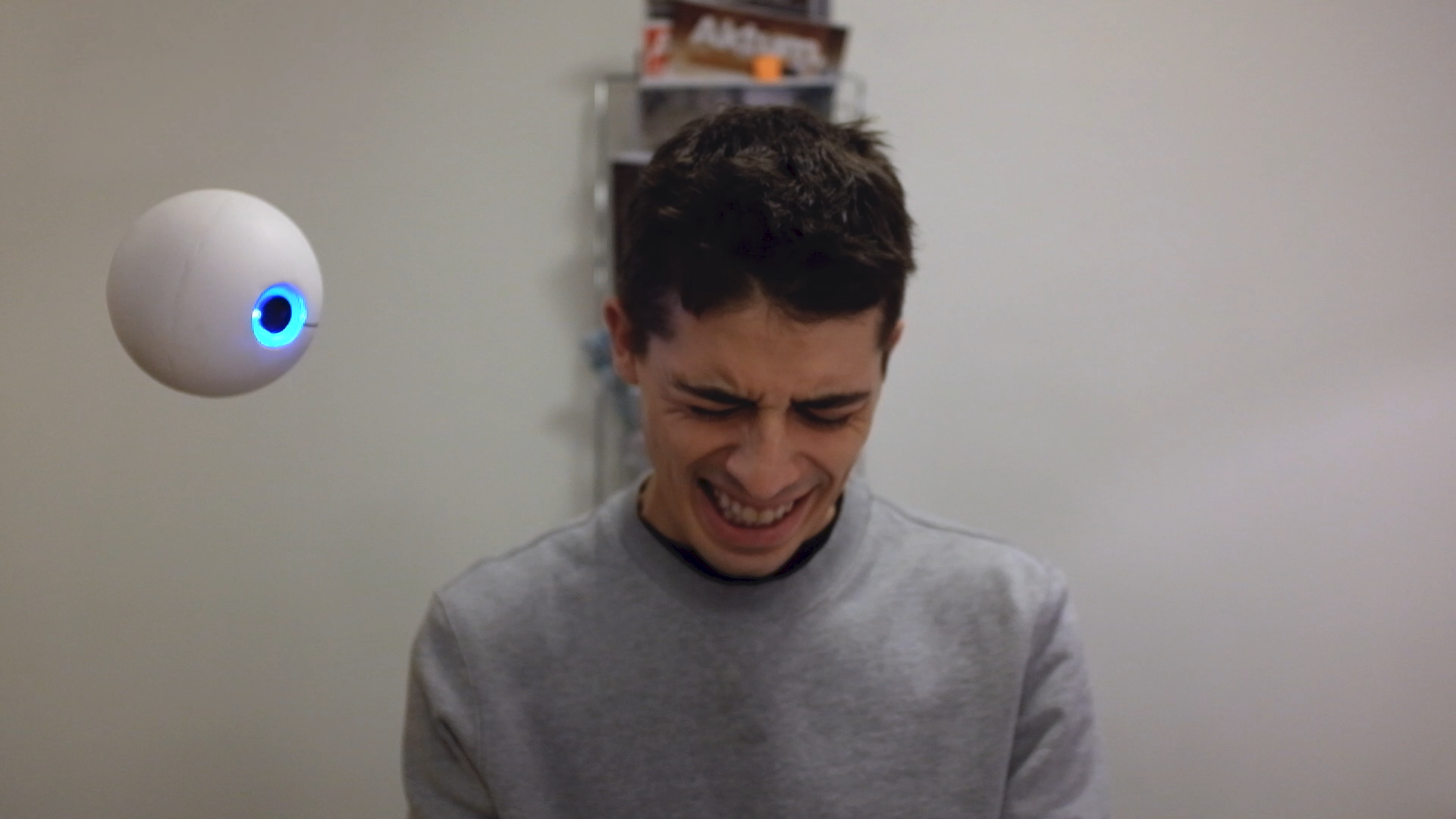

A History Of Neural Interfaces is a design fiction that explores a future vision of 2038 where cyborgs have become common in society. They are connected by neural interfaces, a syringe-injected technology which allows them to interact with certain "IoT" connected objects that have been designed to function with the neural implant.

External Tutor:

Bastien Kespern, Antiped

A perfect day in the life of a cyborg named Dave – Design fiction diegetic prototype

Our design fiction project was conceived to give students a chance to focus on solving problems in an intangible future where an infinite number of possibilities exist. One other caveat required the project to be “faceless”, in that no screen based interactions could be used. After selecting my interaction medium, neural interactions (interactions that take place through the use of thought), I entered an extensive research and prototyping phase where I began exploring what a world with neural interfacing could look like. After developing a number of viable prototypes, I needed a framework for engaging people in the material I had created so far, so that I could begin gathering user based insights and feedback.

After discussion with my project advisors, it was decided that a speculative design workshop, where participants would view an interactive timeline built from the prototypes I had created, would be an excellent way to facilitate discussion.

Specific gaps would be intentionally left open, which would allow participants to formulate and present ideas on what might have to occur between 2018 and 2038 for this timeline to become a reality.

I facilitated a four-hour speculative design workshop with six medical doctors in Umeå and collected the final results in the form of sticky notes and video conversation.

Every participant-generated post-it note helps formulate a perspective on how the future of 2038 came to be, while simultaneously generating new potential futures through alternate timelines. Lastly, this workshop can be repeated, either using the previously populated timeline or starting with a fresh timeline and then comparing timelines, to gain insight into how we might preemptively course correct future timelines through present day design.

Without an existing neural interface to test with I had to get creative with ways of prototyping to explore the modality.

Turning On A Light Switch Via Neural Interface:

This prototype conceptualizes how a neural interface could be used to turn on a light source. Since there are no visible interactions taking place, it raises interesting questions about how design works in this context. Later this was turned into a diegetic prototype, as it proved to be an excellent reference for workshop participants.

Shared Neural Interfacing Experience:

A user test where two people attempt to turn on a light switch using their neural interfaces. Complications arise because there are no physical cues defining who will be the one to turn the light switch on. In this prototype the neural interface is substituted with a simple rocker switch hidden below the table so the other person cannot see it.

Weak Signals Participatory Design Workshop:

A number of Umeå University medical students were recruited to join a participatory design workshop where present day literature and news articles were discussed as weak signals that could help inform a discussion about the future ramifications of cyborgs and neural interfaces on society. The information was then catalogued in the form of a timeline created by the participants.

-

Democratizing Our Data

An Umeå Institute of Design MFA in Interaction Design thesis exploring how humanity will live in a world where data is or isn’t controlled.

UID Closing Thesis Presentation Class of 2018

The 2018 scandal where Cambridge Analytica tampered with U.S. elections using targeted ad campaigns driven by illicitly collected Facebook data has shown us that there are consequences to living in a world of technology driven by data.

Mark Zuckerberg recently took part in a congressional hearing making the topic of controlling data an important discussion at even the highest level of the government. Alternatively we can also recognize the benefits that data has in terms of technology and services that are highly personalized because of data. There’s nothing better than a targeted ad that appears at just the right time when you need to make a purchase or when Spotify provides you with the perfect playlist for a Friday night.

This leaves us torn between opposites: to reject data and abandon our technology, returning to the proverbial stone age, or to accept being online all the time, monitored by a vast network of sensors that feed data into algorithms that may know more about our habits than we do. It is the friction between these polar opposites that will lead us on a journey to find balance between the benefits and negatives of having data as part of our everyday lives.

To help explore the negatives and positives on this journey I developed Data Control Box, a product that asks the question “How would you live in a world where you can control your data?” Found in homes and workplaces, it allows individuals or groups of people to control their data by placing their mobile devices into its 14x22.5x15 cm acrylic container. Data Control Box limits the production of personal data by putting a physical barrier between users and their devices before the data is created. This physical embodiment of data control disrupts everyday habits around using a mobile device, which in turn creates the opportunity for reflection and questioning on what control of data is and how it works. For example, a person using Data Control Box can still create data using a personal computer despite having placed their mobile device inside Data Control Box. Being faced with this realization reveals aspects of the larger systems that might not have been as apparent without Data Control Box, and can serve as a starting point for answering the question “How would you live in a world where you can control your data?”

Installation of Data Control Box at a participant's desk.

To further build on this discussion, people using Data Control Box are encouraged to share their reflections by tweeting to the hashtag #DataControlBox. These tweets are displayed through Data Control Box’s 1.5 inch OLED breakout board connected to an Arduino micro-controller. Data Control Box can interface with any network connected computer using a USB cord, which also serves as a power source.
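Fitting a tweet onto a display this small means breaking it into short lines and pages. The sketch below shows one way that could be done on the connected computer before the text is sent to the Arduino. It is an illustration only: the characters per line and lines per page are assumptions that depend on the display and font, and this is not the code running on Data Control Box.

```python
import textwrap

CHARS_PER_LINE = 21  # assumption: depends on the display and font used
LINES_PER_PAGE = 4   # assumption: lines shown on screen at once


def paginate(tweet):
    """Split a tweet into pages of wrapped lines for a small display."""
    lines = textwrap.wrap(tweet, width=CHARS_PER_LINE)
    return [lines[i:i + LINES_PER_PAGE]
            for i in range(0, len(lines), LINES_PER_PAGE)]
```

Each page could then be shown for a few seconds before moving on to the next one.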

The connected feature of Data Control Box allows units found around the world to become nodes in a real time discussion about the balance of data as a part of everyday life, and also serves as a collection of the discussions that have taken place since May of 2018. As a designer, deploying Data Control Box allowed me to probe the lives of real people and to see how they might interact not only with Data Control Box but also with their data in a day to day setting. A total of fifteen people interacted with Data Control Box, following a single protocol that was read aloud to them beforehand.

Collecting feedback on the User Interface.

A participant uses Data Control Box for an extended period during an overnight test.

A number of different contexts for the deployment of Data Control Box were explored, such as at home, on a desk at school and during a two hour human computer lecture. During these deployments I collected a variety of qualitative research in the form of photos and informal video interviews, which I synthesized into insights that designers can use when considering how to design for the control of data, and also how to design for complex subjects like data. My thesis dissertation retraces my arrival at this final prototype, sharing the findings of my initial research collected during desk research, initial participant activities, and the creation of my initial prototype, Data Box /01. It then closes with a deeper dive into the design rationale and process behind building my final prototype, Data Control Box.

-

Koala CUI Automated Bus Kiosk:

A 10-week collaborative project with Microsoft exploring Conversational User Interfaces (CUIs) for a service context.

Design Team:

Melissa Hellmund, Eduardo Pereira, Christoph Zobl

Koala Conversation UI Product Demo

As our team did not have access to complex language libraries such as those used in Siri or Cortana, we opted to use a Wizard of Oz prototype to build a bus ticket kiosk CUI. We took this prototype out into the real world for guerrilla usability testing, which resulted in us formulating these CUI design principles:

Physical Presence of the CUI: The design of the CUI language and physical kiosk should inform users that the CUI is aware of the user's presence. We addressed this in our design by having the kiosk light up when a user enters an acceptable interaction distance, followed by a verbal greeting.

Navigation Alternation Between The User and the CUI: The design of the CUI and the physical kiosk should help create a speaking order between the human and the CUI. This is similar to the idea of radiotelephone communication words such as "over."

We addressed this principle by using a "breathing" light informing the user the CUI is waiting to receive an input.

Conversational System Limitations: We intentionally designed the language used by our CUI to guide and limit the system offerings. This helps the users develop a mental model of the overall system which can be useful in future interactions.

User Centered Contextual Awareness: The CUI should be programmed to deal with contextually relevant problems such as where the closest bathroom is.

Distinguishing Between Human to Human Interactions and Human to CUI Interactions: The users should be aware they are speaking to a CUI and not a human, because it will affect how they treat the system. While not immediately relevant now, the better CUIs get at emulating human speech, the more this principle will matter.
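The presence and turn taking principles above can be read as a small state machine. The sketch below is a hypothetical illustration of that idea, not the logic of our prototype, which was operated by hand as a Wizard of Oz. The state names, event names and the light behaviour while the kiosk is speaking are assumptions.

```python
# Hypothetical states and events for a kiosk conversation loop.
TRANSITIONS = {
    ("idle", "user_in_range"): "greeting",     # kiosk lights up and greets
    ("greeting", "speech_done"): "listening",  # it is now the user's turn
    ("listening", "user_spoke"): "speaking",   # it is now the kiosk's turn
    ("speaking", "speech_done"): "listening",
    ("greeting", "user_left"): "idle",
    ("listening", "user_left"): "idle",
    ("speaking", "user_left"): "idle",
}

# Light behaviour per state; "breathing" signals the kiosk is waiting for input.
LIGHT = {"idle": "off", "greeting": "on", "listening": "breathing", "speaking": "on"}


def step(state, event):
    """Return the next state; unknown events leave the state unchanged."""
    return TRANSITIONS.get((state, event), state)
```

Keeping the light tied to the state, rather than to individual phrases, is what gives the user a consistent cue about whose turn it is.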